FilterMaintain: An IoT Product

Three years ago, I enrolled in a dedicated IoT program to learn the subject in a more structured way. IoT as a concept was not new, especially for those who work in automation. I was working in the building automation field, where IoT had become part of the technology offerings of leading BAS manufacturers, especially with the push for cloud services, including advanced analytics based on machine learning.

I won't dive into the history of IoT. Instead, I want to share an exciting project I worked on as the capstone of my IoT program. My aim is to show that anyone who is interested can nowadays, with some extra effort, design and build an IoT idea. There are many startups in the IoT space, and I recommend you search for and read about the different concepts and their applications in various industries.

Now, back to the project. I had two ideas for it:

- The first was to monitor cold rooms and medical fridges at hospitals and medical research centers by connecting them to the internet and offering analytics on the reported temperatures and, most importantly, on temperatures rising above the permitted limits.

- The second idea was about the maintenance gap for filter changing or cleaning. That was the idea I decided to work on. I named the product FilterMaintain.

I was interested in the FilterMaintain concept because, in my work, customers complained about problems with filter monitoring on their air handling units and asked how the automation system could solve them through different alarming methods. Those who work in the Arab Gulf know well that air filters (especially on the outdoor side) get dirty quickly because of the high level of sand particles and the humidity during certain periods of the year.

I realized that a time gap in air-filter maintenance diminishes indoor air quality and increases the energy use of the air handling equipment in buildings. In some cases, it places additional strain on the fan motors, which over time can burn them out and shut down the whole unit. The gap appears when filter maintenance calls are delayed until the planned preventative schedule is due. It also appears after the building management system reports high dirty-filter readings to the building operators, because the business process of getting the maintenance team to the site takes at least 24 hours.

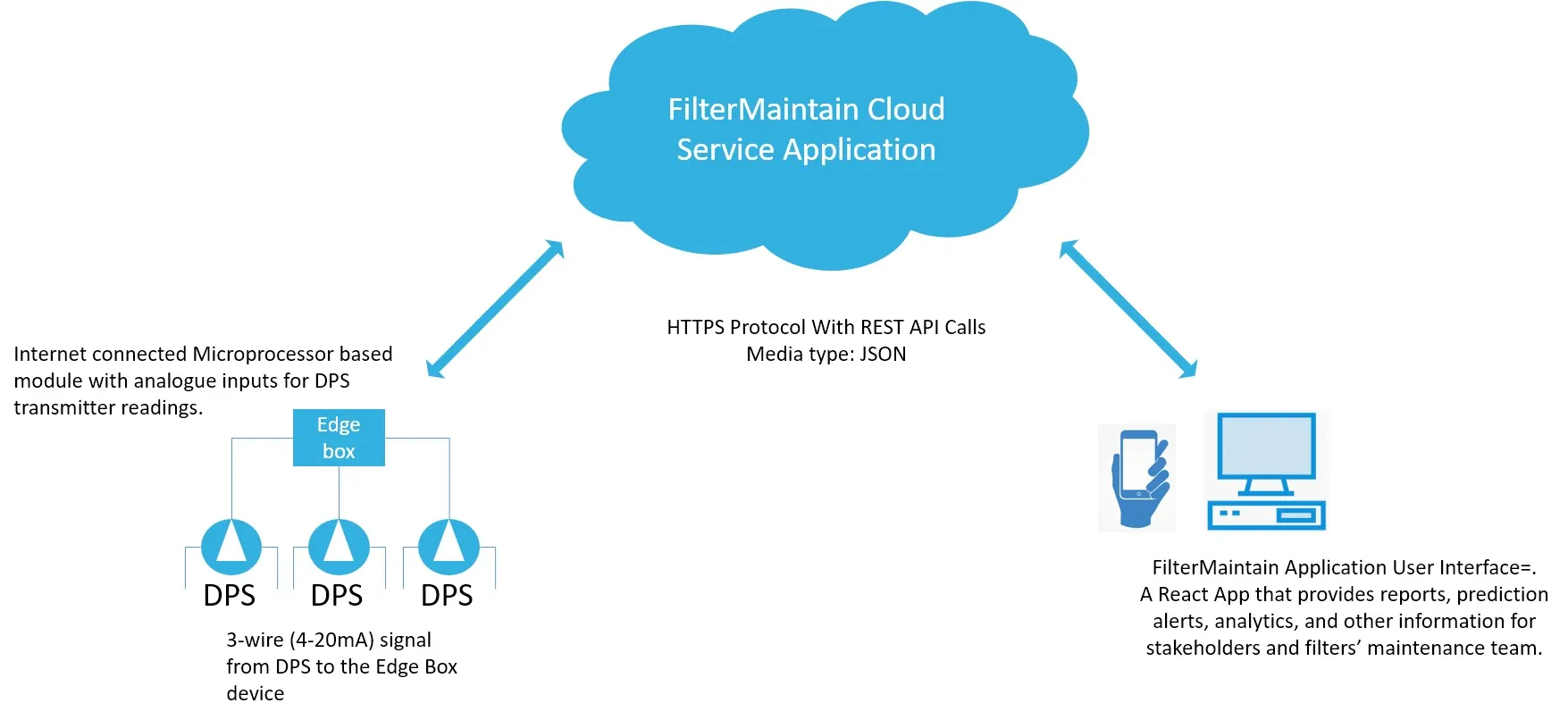

I drafted a solution to close the air filter maintenance time gap and make the whole operation efficient. It uses machine learning to predict and recommend the best time to call for filter maintenance, and it automates warnings and alarms sent directly to the maintenance team or company on behalf of the building owners. The solution aimed to provide efficient maintenance schedules (without adversely affecting airflow and equipment efficiency), contribute to people's health, help reduce energy consumption, enhance the maintenance operating process, and save costs for building owners.

Beyond basic air filter status monitoring, there are two approaches to maintaining air filters: reactive and preventative. In the reactive approach, the maintenance team responds to a dirty-filter alarm. In the preventative approach, the team visits the site periodically, conducts a thorough inspection, and maintains the filters where needed.

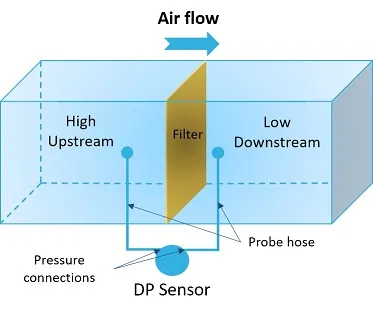

Before I get into the details, here is a quick overview of air filter status monitoring for those unfamiliar with it. Filters are positioned to capture solids and particulates in the air stream. The filter obstructs the flow through the duct, which lowers the pressure on the downstream side. The size of this effect depends on the filter's media (the material that removes the impurities). As the filter becomes clogged, the downstream pressure drops further. The result is a higher differential pressure reading (pressure loss) between the upstream and downstream sides, known as ΔP (Delta-P).

When the ΔP reading reaches the upper value at which maintenance is required, as recommended by the filter manufacturer, the system triggers an alarm. Saturated filters may also begin to shed captured particles, and because the filter no longer functions correctly, contaminants can escape into the process. Proper monitoring of the pressure drop is therefore crucial for assessing filter status.
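As a minimal sketch of the alarm rule described above, the Python snippet below computes ΔP from an upstream and a downstream pressure reading and compares it with a maintenance limit. The function names and the 250 Pa limit are assumptions for illustration only; the real limit comes from the filter manufacturer's data sheet.

```python
def differential_pressure(upstream_pa: float, downstream_pa: float) -> float:
    """Return the pressure loss across the filter (delta-P) in pascals."""
    return upstream_pa - downstream_pa


def filter_alarm(delta_p_pa: float, limit_pa: float) -> bool:
    """Return True when delta-P has reached the maintenance limit."""
    return delta_p_pa >= limit_pa


# Hypothetical readings: 500 Pa upstream, 230 Pa downstream, 250 Pa limit.
delta_p = differential_pressure(500.0, 230.0)
needs_maintenance = filter_alarm(delta_p, limit_pa=250.0)
```

In practice a differential pressure sensor reports ΔP directly, so only the comparison step would be needed.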

The Hardware

For the hardware, there are dozens of controller and computer options that you can use to build your IoT idea. For the edge box, I chose the Raspberry Pi, specifically the Raspberry Pi 3 Model B+. My decision was based on specific criteria:

- It can process the data at the edge before sending it to the cloud application,

- It allows using the desired protocol and web service connectivity,

- It allows implementing specific functions and reporting mechanisms such as error checks, sensor connectivity checks, and the overall health status of the edge system,

- It allows remote configuration and commissioning if desired.

For the differential pressure input, I needed an analog input module compatible with the Pi. I selected the Pi-SPi-8AI module from WidgetLords Electronics. It connects to the Raspberry Pi through the General-Purpose Input/Output (GPIO) port and provides eight analog inputs (AI), each configurable for a 4-20 mA current signal via a jumper.
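To illustrate how a 4-20 mA loop signal can be turned into a pressure value at the edge, here is a small Python sketch. It assumes a hypothetical sensor with a 0-500 Pa range and treats any current below 3.6 mA as a broken loop. Both numbers are assumptions, not values from the project, and the code starts from a current in mA rather than from the module's raw reading.

```python
from typing import Optional


def current_to_pressure(
    current_ma: float,
    span_pa: float = 500.0,  # assumed sensor range: 0-500 Pa
    fault_ma: float = 3.6,   # assumed threshold for a broken or disconnected loop
) -> Optional[float]:
    """Convert a 4-20 mA loop current to a differential pressure in pascals.

    Returns None when the current is too low to be a valid signal, which
    could feed a sensor connectivity check on the edge box.
    """
    if current_ma < fault_ma:
        return None
    # Clamp small overshoots so the result stays inside the sensor range.
    clamped = min(max(current_ma, 4.0), 20.0)
    return (clamped - 4.0) / 16.0 * span_pa
```

With these assumptions, 4 mA maps to 0 Pa, 12 mA to 250 Pa, and 20 mA to 500 Pa, while a reading of 2 mA is reported as a missing sensor instead of a pressure.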

For the cloud infrastructure, I selected a Droplet from DigitalOcean, a Linux-based virtual machine that runs on top of virtualized hardware. The Droplet hosts the cloud application server and other services as part of the system architecture.

The Software

Data in the Cloud

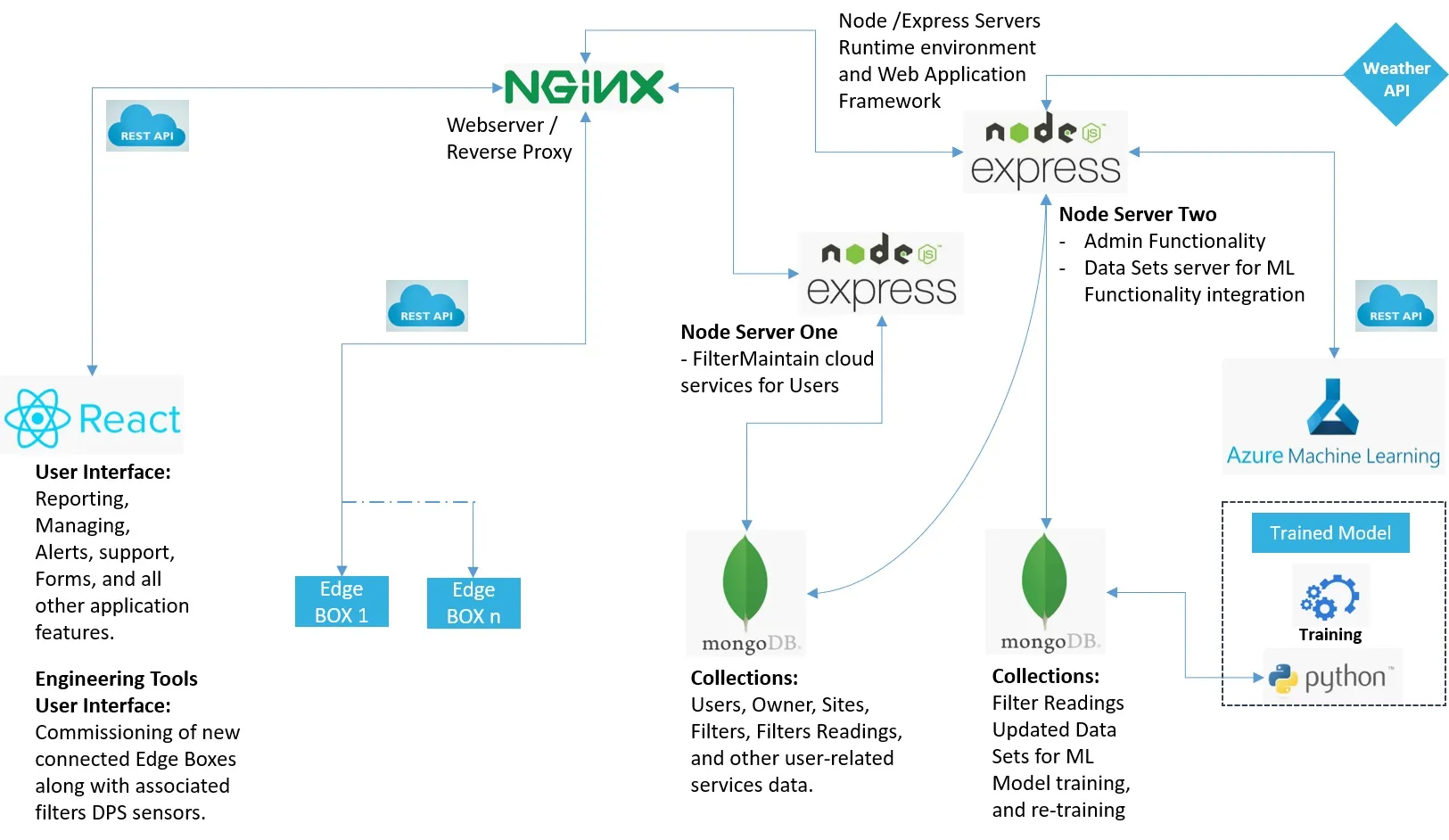

At the core of the cloud application are HTTP servers built with Node.js, an asynchronous, event-driven JavaScript runtime designed for building scalable network applications. There are two servers: one handles the end-user side of the application, and the other handles administration, database management, and third-party service integration. I used Express, a web framework for Node.js, to build the web application faster and to design the solution's APIs.

With Node, I installed multiple packages that streamline some of the functionalities:

- Cross-origin resource sharing (CORS)

- body-parser, an Express middleware that reads and parses HTTP POST data

- Passport.js, an authentication middleware for Node.js

- passport-jwt, a Passport strategy for authenticating endpoints with a JSON Web Token

- Axios, a Promise-based HTTP client for the browser and Node.js

I used Nginx as a reverse proxy to load-balance the FilterMaintain application and as an API gateway that routes each request to the appropriate Node.js service.

The Database

After researching the database options, I selected MongoDB, a NoSQL document database that stores data in JSON-like documents. It let me handle the data collected from the differential pressure sensors (DPSs) efficiently and pass it to the ML model to improve the predictions. The Mongoose package for Node.js made it straightforward to work with. I decided to split the data flow between two separate databases: one handles the user-related data along with the installed filters and their readings, and the other manages the archived real-time data collected from the site.

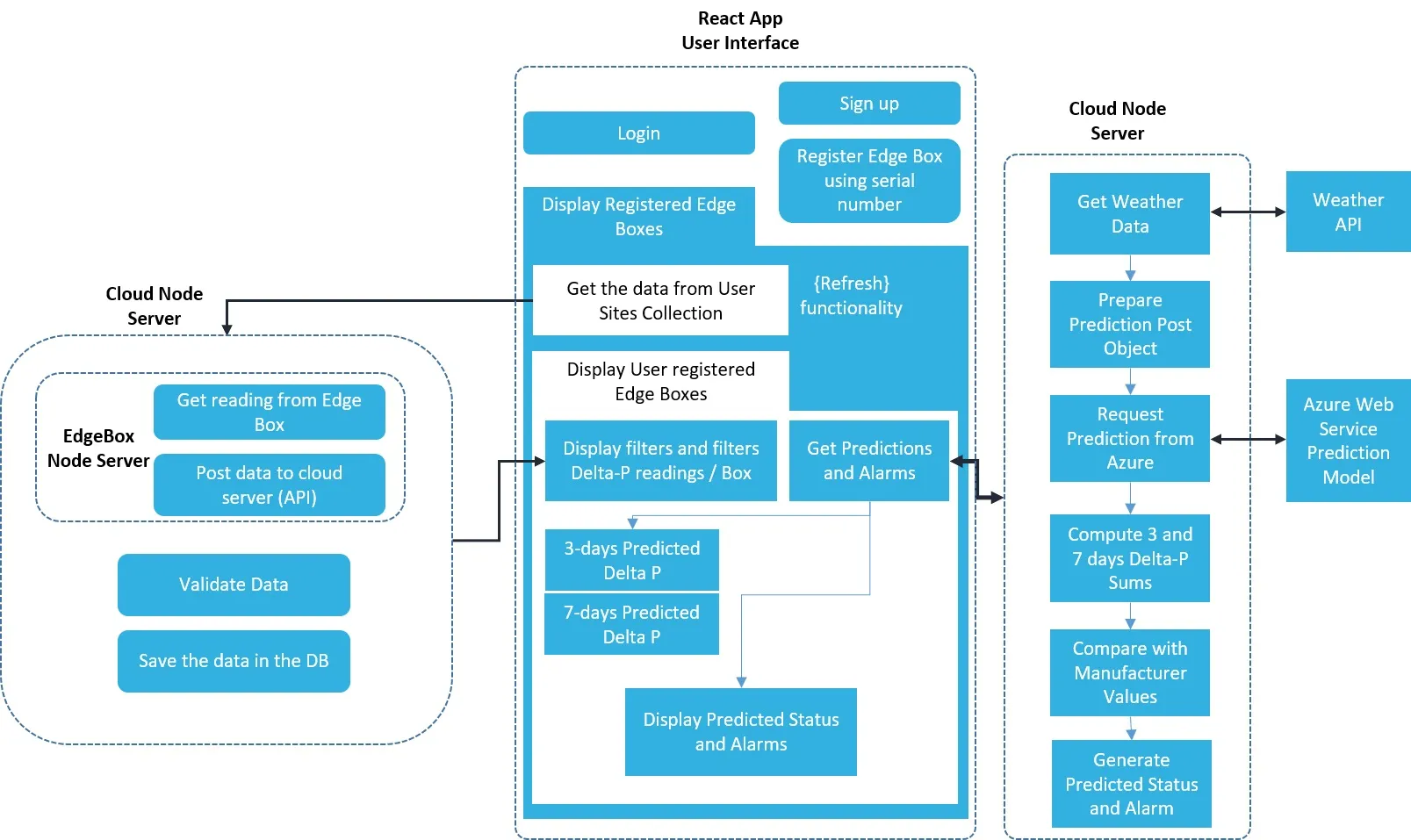

The Prediction Functionality

The filter maintenance prediction function is the cornerstone of the FilterMaintain application. It answers the main question: when will an air filter most likely need maintenance?

Predicting when a filter serving a specific unit requires maintenance involves several variables, mainly:

- Filter size, cross-section, and technical specification

- The minimum efficiency reporting value (MERV)

- The application the filter is serving

- The weather and air quality (PM10 and PM2.5)

For that, I selected multiple linear regression (linear regression with several features) for the predictions. The prediction equation becomes:

y = m1 * FilterModel + m2 * PM2.5value + m3 * PM10value + b

Here, y is the dependent variable (the differential pressure reading), m1, m2, and m3 are the coefficients, b is the intercept, and FilterModel, PM2.5value, and PM10value are the independent variables (the features).
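To make the equation concrete, the following Python sketch fits y = m1 * FilterModel + m2 * PM2.5value + m3 * PM10value + b by ordinary least squares using only the standard library. It is not the model from the project: the function names are hypothetical, the training rows are synthetic numbers invented for the example, and encoding FilterModel as a plain number is an assumption.

```python
def fit_linear_regression(rows, targets):
    """Least-squares fit of y = m1*x1 + ... + mk*xk + b via the normal equations."""
    # Append a constant 1.0 to each row so the intercept b is fitted like a coefficient.
    design = [list(row) + [1.0] for row in rows]
    n = len(design[0])
    # Build the augmented matrix [X^T X | X^T y].
    aug = []
    for i in range(n):
        line = [sum(r[i] * r[j] for r in design) for j in range(n)]
        line.append(sum(r[i] * y for r, y in zip(design, targets)))
        aug.append(line)
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("features are linearly dependent; cannot fit")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    solution = [aug[i][n] / aug[i][i] for i in range(n)]
    return solution[:-1], solution[-1]  # (coefficients, intercept)


def predict(coefficients, intercept, features):
    """Apply the fitted equation to one row of features."""
    return sum(m * x for m, x in zip(coefficients, features)) + intercept


# Synthetic rows: (FilterModel, PM2.5value, PM10value) and delta-P in Pa.
rows = [(1, 10, 20), (2, 15, 40), (1, 30, 60), (3, 20, 90), (2, 40, 50)]
targets = [90.0, 120.0, 150.0, 165.0, 175.0]

coefficients, intercept = fit_linear_regression(rows, targets)
predicted_dp = predict(coefficients, intercept, (2, 25, 50))
```

Because the synthetic targets were generated from m1 = 10, m2 = 2, m3 = 0.5, and b = 50, the fit recovers those values up to floating-point error. Real sensor data would be noisy, and a library such as scikit-learn would be the more usual choice for a production model.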

Third-party API Integration

The weather reading is an essential variable for the application and the prediction model. I selected the IQAir AirVisual API, which gives seven days of forecast values for the air quality index along with the concentrations of the fundamental pollutants. I deployed the machine learning model on Azure ML.
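One way a forecast could feed the prediction is sketched below in Python: the regression equation is applied to each forecast day, and the first day on which the predicted ΔP reaches the maintenance limit is returned. The coefficients, the forecast values, and the 200 Pa limit are all made-up numbers, the function name is hypothetical, and no real API call is shown.

```python
from typing import Optional, Sequence, Tuple


def first_maintenance_day(
    forecast: Sequence[Tuple[float, float]],  # (PM2.5, PM10) per forecast day
    filter_model: float,
    coefficients: Tuple[float, float, float],  # (m1, m2, m3)
    intercept: float,
    limit_pa: float,
) -> Optional[int]:
    """Return the index of the first forecast day whose predicted delta-P
    reaches the limit, or None if no day in the forecast does."""
    m1, m2, m3 = coefficients
    for day, (pm25, pm10) in enumerate(forecast):
        predicted = m1 * filter_model + m2 * pm25 + m3 * pm10 + intercept
        if predicted >= limit_pa:
            return day
    return None


# Hypothetical four-day forecast and model parameters.
forecast = [(20.0, 40.0), (30.0, 60.0), (55.0, 110.0), (70.0, 150.0)]
day = first_maintenance_day(
    forecast,
    filter_model=1.0,
    coefficients=(10.0, 2.0, 0.5),
    intercept=50.0,
    limit_pa=200.0,
)
```

With these numbers the predicted ΔP is 120, 150, and 225 Pa on the first three days, so the function returns index 2, the third day of the forecast.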

User Interface

The interface is the application's front end. It covers all the features needed to onboard a user, commission the edge box, and register the filters and their associated differential pressure sensors. I chose the React.js library for it. I built the user interface, moved the build files to the Droplet as a web application, and configured the Nginx proxy routing for the FilterMaintain domain.

There are many details in this project, including security considerations, and I have tried to summarize the key points. I could also have used other communication protocols and tools to build the whole IoT concept. Many of you might argue for different approaches, and this concept may have flaws. Again, my aim in sharing it is to encourage you to take an extra step and build your own IoT concept.

However complex a solution is, by breaking it into pieces and building the product from the bottom up with a clear vision of the final version and its value, you can achieve beautiful results. Just imagine the information and analysis that the collected data and filter performance over time could provide.

Anything is possible.

Thank you for being here and reading this piece.